The landscape of autonomous driving shifted significantly this week during the XPENG Motors Future VLA Media Experience Day in Guangzhou. The company announced that its next-generation intelligent driving system, VLA 2.0, will begin global delivery in 2027. More importantly for the broader automotive industry, Volkswagen has signed on as the first customer for the technology in the Chinese market.

This partnership marks a pivotal moment in the evolution of vehicle software. Legacy automakers have often struggled to develop in-house full-stack autonomous solutions capable of competing with modern AI-driven players. Volkswagen’s decision to integrate XPENG software signals a new phase of cross-border technical collaboration. VLA, short for Vision-Language-Action, represents a shift away from rigid, rule-based systems toward a more adaptive, human-like artificial intelligence foundation.

The architecture behind VLA 2.0 is designed to overcome the limitations of traditional modular autonomous stacks. Most current systems operate through a sequential pipeline in which visual perception is converted into language-based reasoning before the vehicle executes an action. This structure can introduce latency and limit the vehicle’s ability to generalize across diverse environments. XPENG’s end-to-end vision-to-action architecture removes these intermediate translation layers, enabling perception to flow directly into driving decisions and improving both efficiency and responsiveness in complex traffic.
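To make the structural difference concrete, here is a toy sketch in Python. It is not XPENG's implementation: the `Action` type, the list-of-floats `Frame`, the 0.8 threshold, and every function name are hypothetical stand-ins chosen for illustration. It only contrasts a staged pipeline that passes through a text description with a single function that maps the input straight to an action.

```python
from dataclasses import dataclass
from typing import Dict, List

# Hypothetical stand-in for camera input: a list of brightness-like values.
Frame = List[float]


@dataclass
class Action:
    steer: float  # illustrative steering command
    accel: float  # illustrative acceleration command


def perceive(frame: Frame) -> Dict[str, bool]:
    """Stage 1 of the staged pipeline: build a symbolic scene summary."""
    return {"obstacle_ahead": max(frame) > 0.8}


def describe(scene: Dict[str, bool]) -> str:
    """Stage 2: translate the scene into a language-style description."""
    if scene["obstacle_ahead"]:
        return "obstacle ahead, slow down"
    return "road clear, proceed"


def plan_from_text(text: str) -> Action:
    """Stage 3: turn the description back into a driving command."""
    if "slow down" in text:
        return Action(steer=0.0, accel=-2.0)
    return Action(steer=0.0, accel=1.0)


def staged_policy(frame: Frame) -> Action:
    """Sequential pipeline: vision -> language -> action."""
    return plan_from_text(describe(perceive(frame)))


def direct_policy(frame: Frame) -> Action:
    """Single mapping: vision -> action, with no text intermediary.

    In a real system this would be a learned model; here it is a
    hand-written rule so the example stays runnable.
    """
    return Action(steer=0.0, accel=-2.0 if max(frame) > 0.8 else 1.0)


if __name__ == "__main__":
    frame = [0.1, 0.4, 0.9]
    print(staged_policy(frame))
    print(direct_policy(frame))
```

Both functions reach the same decision in this toy case. The point is only that the staged version has two extra hand-offs where information can be lost or delayed.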

Early testing shows a 23 percent improvement in driving efficiency. In measured trials during dense evening rush hour traffic in Guangzhou, the system reportedly outperformed traditional Level 2 intelligent driving systems and even some existing robotaxi models. Performance is said to be comparable to experienced human drivers navigating heavy congestion without the hesitation often seen in earlier autonomous software.

Powering this shift is XPENG’s proprietary Turing AI chip. The hardware represents a major leap in onboard computing capability, featuring a 40-core processor built to handle large-scale AI models in real time. Running an end-to-end vision-to-action model without a language-layer intermediary demands significant processing capacity to manage continuous visual data streams. The Turing chip provides that backbone, effectively turning the vehicle into a high-performance AI workstation on wheels.

VLA 2.0 is designed to enable what XPENG describes as a “drive anywhere” experience. This includes campus roads, rural dirt routes, and areas without high-definition maps. The system can manage narrow lane passages, pothole avoidance, and start-from-standstill scenarios, supporting a comprehensive assisted driving experience. Reducing dependence on HD maps makes global deployment far more scalable, since roads no longer need to be pre-mapped with centimeter-level precision.

Beyond passenger vehicles, XPENG is positioning the VLA 2.0 foundation model as central to its broader physical AI strategy. The same architecture is being applied across robotaxi fleets, humanoid robots, and modular flying vehicle systems. The objective is to build a unified AI foundation capable of powering intelligent machines that operate safely and predictably in real-world environments, regardless of form factor.

Public road testing of VLA 2.0-equipped robotaxis has already begun. Trial operations are scheduled to start later this year in China, with three mass-produced robotaxi models planned by 2026. These are intended as scale-ready vehicles designed for Level 4 capability. By sharing the same core architecture as consumer cars, XPENG can leverage shadow driving data from its broader fleet to continuously train its robotaxis, creating a feedback loop that accelerates safety and performance improvements.

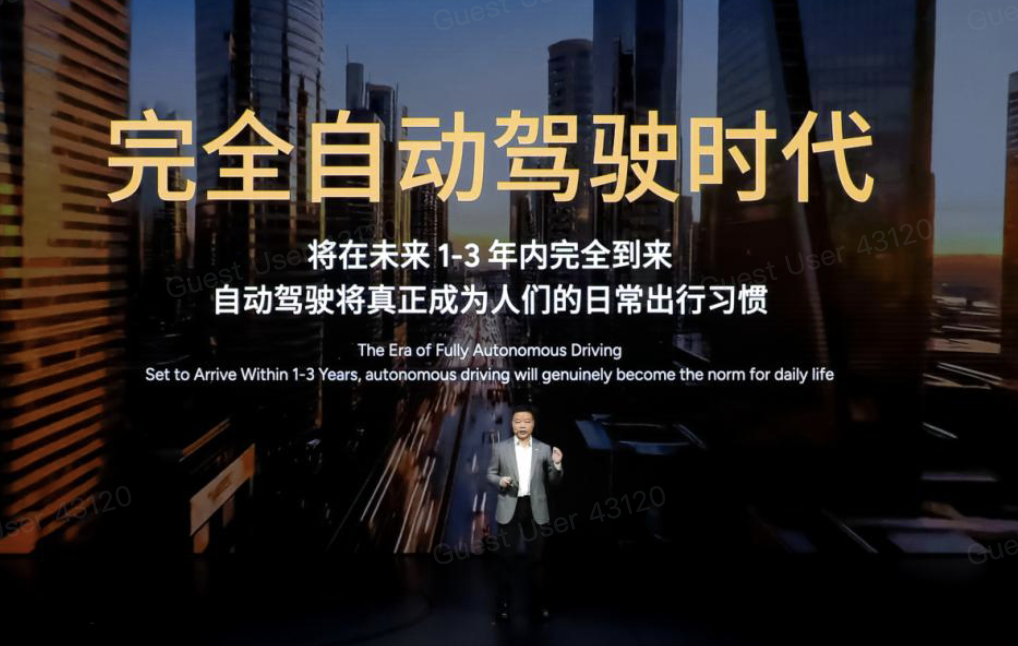

He Xiaopeng, XPENG’s chairman and CEO, described this version as the first built to achieve full autonomous driving and said it will iterate at an unprecedented pace. “We believe that full autonomy will arrive within the next one to three years, making autonomous driving a natural part of people’s daily travel,” he said during the event.

The announcement also underscored a shift in training methodology. Rather than relying on predefined rules for specific scenarios, the VLA 2.0 model learns from nearly 100 million driving video clips, equivalent to roughly 65,000 years of human driving experience. This dataset enables the system to anticipate rare or erratic edge cases before they unfold, a crucial capability for driverless taxi services. The model interprets complex road environments and responds with increasingly human-like driving behavior, identifying erratic vehicles, accident scenes, and uneven surfaces while reacting proactively to maintain smooth and dependable operation.
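The article does not say how the clip count was converted into years of experience. As a rough sanity check, the short Python sketch below works out the average footage per clip that the two figures imply. The function name and the `driving_hours_per_day` parameter are assumptions introduced here, and 100 million is used as a round stand-in for "nearly 100 million".

```python
DAYS_PER_YEAR = 365.25
SECONDS_PER_HOUR = 3600


def implied_clip_seconds(
    clips: int,
    years: float,
    driving_hours_per_day: float = 24.0,
) -> float:
    """Average seconds of footage per clip implied by the two headline figures.

    driving_hours_per_day is an assumption, not a number from the article:
    24.0 treats a "year of experience" as a full calendar year of driving,
    while a smaller value treats it as a year in the life of a typical driver.
    """
    total_seconds = years * DAYS_PER_YEAR * driving_hours_per_day * SECONDS_PER_HOUR
    return total_seconds / clips


if __name__ == "__main__":
    for hours in (24.0, 1.0):
        seconds = implied_clip_seconds(100_000_000, 65_000, hours)
        print(f"{hours:>4} h/day -> {seconds / 60:.1f} minutes per clip")
```

Read as calendar years, the figures imply clips averaging several hours each. Read as years of a driver who spends about an hour a day behind the wheel, they imply clips of roughly a quarter of an hour. The second reading looks more plausible for "clips", but the source does not specify either.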

For global markets, the 2027 delivery plan represents a significant expansion. XPENG will initiate international road testing to validate performance across diverse driving cultures and infrastructure conditions. The success of this rollout will likely hinge on the inaugural launch with Volkswagen. If integration proves successful, it could offer a blueprint for other legacy automakers seeking to close software gaps through partnerships with specialized AI firms.

The shift toward an end-to-end vision-to-action system reflects a broader industry movement toward embodied AI. Proponents argue that treating the vehicle as a physical agent that perceives and acts directly on its environment is more reliable than older modular approaches. With VLA 2.0, XPENG aims to elevate both comfort and confidence in intelligent driving, delivering a smoother and more intuitive experience.

As robotaxi trials begin later this year, the industry will closely watch whether the efficiency gains demonstrated in Guangzhou can be replicated in other cities. A 23 percent improvement in driving efficiency carries clear economic implications for fleet operators, where every minute affects utilization and revenue. If XPENG meets its 2027 global target, the relationship between drivers and vehicles could undergo a lasting transformation. The software-defined vehicle is no longer a distant concept but an emerging reality now extending to some of the world’s largest automotive brands.
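What a 23 percent gain means for a fleet depends on how "efficiency" is measured, which the announcement does not define. The Python sketch below converts between two plausible readings: a gain in trips completed per hour, and a reduction in time per trip. Both function names are hypothetical, and neither reading is confirmed by XPENG.

```python
def time_saved_from_throughput_gain(gain: float) -> float:
    """Fractional cut in time per trip if trips per hour rise by `gain`.

    Trip time is the inverse of throughput, so a gain g shortens each
    trip by 1 - 1 / (1 + g).
    """
    return 1.0 - 1.0 / (1.0 + gain)


def throughput_gain_from_time_saved(saved: float) -> float:
    """Fractional rise in trips per hour if time per trip falls by `saved`.

    Requires saved < 1, since a trip cannot take zero or negative time.
    """
    return 1.0 / (1.0 - saved) - 1.0


if __name__ == "__main__":
    g = 0.23
    print(f"23% more trips per hour -> {time_saved_from_throughput_gain(g):.1%} less time per trip")
    print(f"23% less time per trip  -> {throughput_gain_from_time_saved(g):.1%} more trips per hour")
```

Under the first reading each trip gets about 18.7 percent shorter. Under the second, a vehicle completes about 29.9 percent more trips per hour. For an operator pricing by the trip, that is a meaningful difference.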
